Semantic segmentation is the task of clustering parts of an image together which belong to the same object class. It is a form of pixel-level prediction because each pixel in an image is classified according to a category. In this project, I have performed semantic segmentation on Semantic Drone Dataset by using transfer learning on a VGG-16 backbone (trained on ImageNet) based UNet CNN model. In order to artificially increase the amount of data and avoid overfitting, I preferred using data augmentation on the training set. The model performed well, and achieved ~87% dice coefficient on the validation set.

|

|

|

|

|

|---|

|

|

|

|

|

|

|---|

The Jupyter Notebook can be accessed from here.

Semantic segmentation is the task of classifying each and very pixel in an image into a class as shown in the image below. Here we can see that all persons are red, the road is purple, the vehicles are blue, street signs are yellow etc.

Semantic segmentation is different from instance segmentation which is that different objects of the same class will have different labels as in person1, person2 and hence different colours.

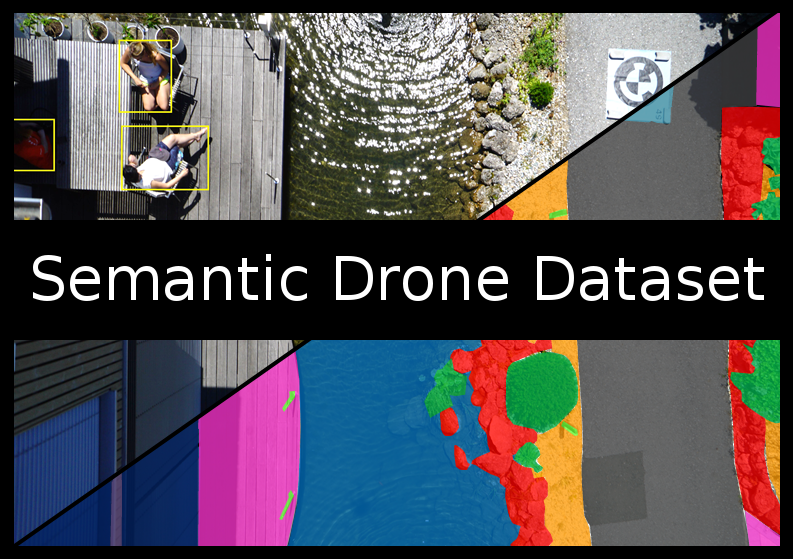

The Semantic Drone Dataset focuses on semantic understanding of urban scenes for increasing the safety of autonomous drone flight and landing procedures. The imagery depicts more than 20 houses from nadir (bird's eye) view acquired at an altitude of 5 to 30 meters above ground. A high resolution camera was used to acquire images at a size of 6000x4000px (24Mpx). The training set contains 400 publicly available images and the test set is made up of 200 private images.

The images are labeled densely using polygons and contain the following 24 classes:

| Name | R | G | B | Color |

|---|---|---|---|---|

| unlabeled | 0 | 0 | 0 | |

| paved-area | 128 | 64 | 128 | |

| dirt | 130 | 76 | 0 | |

| grass | 0 | 102 | 0 | |

| gravel | 112 | 103 | 87 | |

| water | 28 | 42 | 168 | |

| rocks | 48 | 41 | 30 | |

| pool | 0 | 50 | 89 | |

| vegetation | 107 | 142 | 35 | |

| roof | 70 | 70 | 70 | |

| wall | 102 | 102 | 156 | |

| window | 254 | 228 | 12 | |

| door | 254 | 148 | 12 | |

| fence | 190 | 153 | 153 | |

| fence-pole | 153 | 153 | 153 | |

| person | 255 | 22 | 0 | |

| dog | 102 | 51 | 0 | |

| car | 9 | 143 | 150 | |

| bicycle | 119 | 11 | 32 | |

| tree | 51 | 51 | 0 | |

| bald-tree | 190 | 250 | 190 | |

| ar-marker | 112 | 150 | 146 | |

| obstacle | 2 | 135 | 115 | |

| conflicting | 255 | 0 | 0 |

Albumentations is a Python library for fast and flexible image augmentations. Albumentations efficiently implements a rich variety of image transform operations that are optimized for performance, and does so while providing a concise, yet powerful image augmentation interface for different computer vision tasks, including object classification, segmentation, and detection.

There are only 400 images in the dataset, out of which I have used 320 images (80%) for training set and remaining 80 images (20%) for validation set. It is a relatively small amount of data, in order to artificially increase the amount of data and avoid overfitting, I preferred using data augmentation. By doing so I have increased the training data upto 5 times. So, the total number of images in the training set is 1600, and 80 images in the validation set, after data augmentation.

Data augmentation is achieved through the following techniques:

- Random Cropping

- Horizontal Flipping

- Vertical Flipping

- Rotation

- Random Brightness & Contrast

- Contrast Limited Adaptive Histogram Equalization (CLAHE)

- Grid Distortion

- Optical Distortion

Here are some sample augmented images and masks of the dataset:

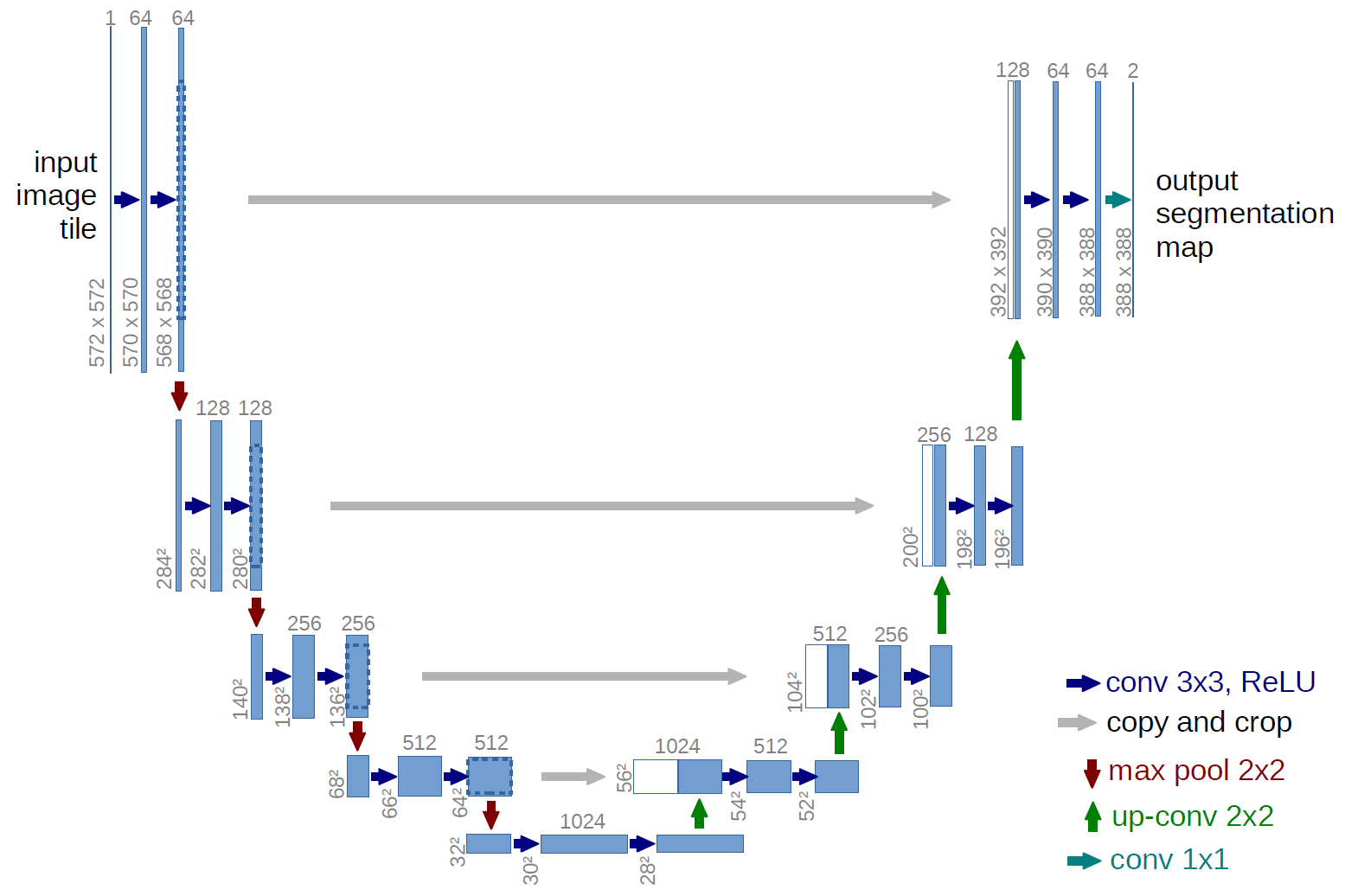

The UNet was developed by Olaf Ronneberger et al. for Bio Medical Image Segmentation. The architecture contains two paths. First path is the contraction path (also called as the encoder) which is used to capture the context in the image. The encoder is just a traditional stack of convolutional and max pooling layers. The second path is the symmetric expanding path (also called as the decoder) which is used to enable precise localization using transposed convolutions. Thus, it is an end-to-end fully convolutional network (FCN), i.e. it only contains Convolutional layers and does not contain any Dense layer because of which it can accept image of any size.

In the original paper, the UNet is described as follows:

U-Net architecture (example for 32x32 pixels in the lowest resolution). Each blue box corresponds to a multi-channel feature map. The number of channels is denoted on top of the box. The x-y-size is provided at the lower left edge of the box. White boxes represent copied feature maps. The arrows denote the different operations.

-

VGG16 model pre-trained on the ImageNet dataset has been used as an Encoder network.

-

A Decoder network has been extended from the last layer of the pre-trained model, and it is concatenated to the consecutive convolution blocks.

VGG16 Encoder based UNet CNN Architecture

A detailed layout of the model is available here.

- Batch Size = 8

- Steps per Epoch = 200.0

- Validation Steps = 10.0

- Input Shape = (512, 512, 3)

- Initial Learning Rate = 0.0001 (with Exponential Decay LearningRateScheduler callback)

- Number of Epochs = 20 (with ModelCheckpoint & EarlyStopping callback)

| Model | Epochs | Train Dice Coefficient | Train Loss | Val Dice Coefficient | Val Loss | Max. (Initial) LR | Min. LR | Total Training Time |

|---|---|---|---|---|---|---|---|---|

| VGG16-UNet | 20 (best weights at 18th epoch) | 0.8781 | 0.2599 | 0.8702 | 0.29959 | 1.000 × 10-4 | 1.122 × 10-5 | 23569 s (06:32:49) |

The model_training_csv.log file contain epoch wise training details of the model.

Predictions on Validation Set Images:

All predictions on the validation set are available in the predictions directory.

Activations/Outputs of some layers of the model-

Some more activation maps are available in the activations directory.

- Semantic Drone Dataset- http://dronedataset.icg.tugraz.at/

- Karen Simonyan and Andrew Zisserman, "Very Deep Convolutional Networks for Large-Scale Image Recognition", arXiv:1409.1556, 2014. [PDF]

- Olaf Ronneberger, Philipp Fischer and Thomas Brox, "U-Net: Convolutional Networks for Biomedical Image Segmentation", arXiv:1505. 04597, 2015. [PDF]

- Towards Data Science- Understanding Semantic Segmentation with UNET, by Harshall Lamba

- Keract by Philippe Rémy (@github/philipperemy) used under the IT License Copyright (c) 2019.